Long post (as most of mine are…)

Reaching out to the community for advice on an internal controversy regarding ETL approach for mapping source_concept_ids. Because both approaches have strengths/weaknesses, interested in hearing from folks who picked one way versus the other and how it has worked out in actual practice.

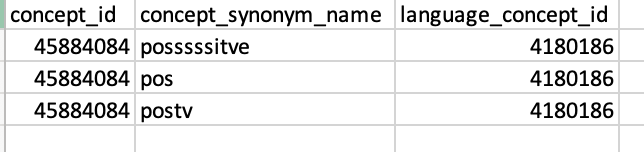

Approach 1: For every lexicographical variation of a source_value where there does not yet exist a nonstandard or standard concept, create a custom, nonstandard concept_id and use as source_concept_id. Then create the appropriate mapping to the semantically correct standard concept_id. Thus, for every variation of “positive”, “pos”, “postv”, posssssitve", etc, there is a separate nonstandard concept_id stored in source_concept_id but all of these source_concept_ids map to the one semantically identical standard concept_id. Same with different lexicographical variations for conditions, observations, measurements.

Approach 2: For every lexicographical variation of a source_value, either use an existing concept (standard or nonstandard) or create a new custom concept that captures the common semantic meaning of the variations and map all of these variations to the semantically identical concept as the source_concept_id. Then map that source_concept_id to the correct standard concept_id.

In Approach 1, there is no semantic harmonization occurring at the source_concept_id step – there is 1:1 fidelity with the source_value. In Approach 2, there is some degree of semantic harmonization that may be more “liberal” (more allowed values) than what is allowed in the final standard concept_id vocabulary. But there no longer is a 1:1 fidelity with the source_value.

There is much more that could be said here, including implications for implementation and the location mapping efforts in the ETL tool chain but I’ll stop here to focus only on the question of using the mapping from source_value into source_concept_id for some degree of semantic harmonization or keeping fidelity with lexicographical variations in source_values. In both settings, you end up with a standard concept in concept_id but how best to leverage the source_concept_id mapping is where our internal controversy resides.