I just want to make a couple of clarifying points so this doesn’t devolve into an “X is better than Y” debate.

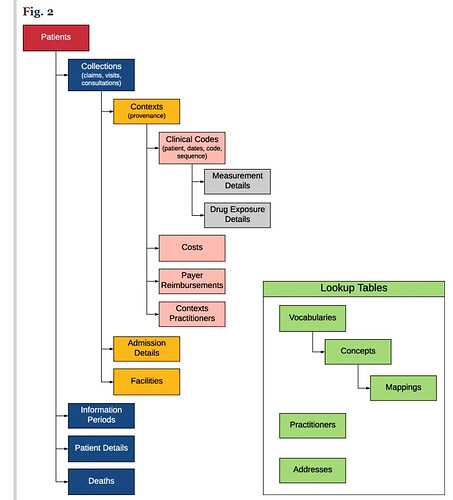

GDM was originally designed as a waypoint in the ETL process from raw data to the OMOP CDM. That is how we started: we wanted to make the OMOP ETL process easier. Our goal was to write an ETL specification document and then operationalize that “document” automatically. In other words, we would run a program over the ETL spec, and it would generate all of the code we need for the ETL. (This is a simplification, but it is close enough for this discussion.) This is why we almost always use the OMOP concept IDs in GDM. The logic is that once the concept IDs are in place, moving the data into the OMOP structure should be a relatively straightforward process.

Another consideration when we created GDM was that we were also working on FDA Sentinel CDM projects at the time. Going from GDM to both OMOP and Sentinel was much easier for us than doing a full ETL from raw data for each model separately.

Once we had everything in place, we realized that, for our purposes, it made more sense to use GDM as our data model. As @jenniferduryea mentioned, we prefer to use code lists specified in the source vocabulary, and GDM is more focused on that approach. We also had some oncology data that didn’t map well to OMOP at the time, so we shifted to GDM for our internal software (Jigsaw).

Every data model has strengths and limitations, including GDM and OMOP. That said, the two were designed with much of the same thinking. The fundamental difference is our focus on source vocabularies rather than standard vocabularies. This is likely because we mainly use claims data, along with EHR and registry data that have well-documented vocabularies (e.g., CPRD and SEER).

Also, keep in mind that the OMOP community is a great source of help and documentation. GDM was built by a small company that may or may not be able to provide detailed guidance in every situation.